Trust is the license to operate in financial services. When AI touches credit, risk or customer data, every output must be defensible to an auditor, a supervisor and a client. That is the starting point, not the afterthought.

In a recent Bank of England and FCA survey of firms using or planning to use AI over the next three years, 46% reported having only a ‘partial understanding’ of the technologies they deploy. For leaders navigating AI adoption, this isn’t just a statistic — it raises a critical question: how do we build systems that stakeholders can trust?

To earn trust, financial institutions (FIs) need full transparency: clear data and model lineage, strong controls, and rigorous oversight across the entire AI stack, including the vendors they partner with.

Since accountability is non-negotiable, explainability and control cannot be optional—they must be measurable. Model risk leaders see the same pattern. The Bank for International Settlements (BIS) warns that weak explainability and opaque model behaviour make model risk management harder, especially with complex machine learning and large language models. Boards and risk committees respond by asking for clearer evidence and accountable control. The question is no longer whether a model performs. It is whether the organisation can prove how it performs, for whom, and under what guardrails.

When outcomes define success, controls cannot vary by tool; they must meet a consistent, rigorous standard. Global policy bodies align on the principle. Analysis by the Organisation for Economic Co-operation and Development (OECD) shows that financial regulation remains technology-neutral and outcomes-focused. That means AI systems must still meet standards on model risk, data quality and operational resilience. In practice, the fundamentals that sustain confidence in finance do not change because the tooling changes. They become more visible and more testable.

With weak controls, value does not compound; it evaporates. The economics of trust are just as clear. Gartner forecasts that over 40% of agentic AI projects will be cancelled by the end of 2027 due to inadequate controls, unclear business value and escalating costs. That forecast points to a simple truth: trust determines whether investment compounds or stalls.

And if trust fails, the bill arrives in real money, not abstractions. IBM’s latest breach study puts the average cost of a data breach in financial services at $5.56 million per incident. That is why data governance, lineage and residency are not IT preferences. They are core risk choices with a direct impact on the P&L.

In this landscape, trust is more than transparency or neat dashboards. Trust is verifiable lineage, accountable governance and continuous oversight that withstand scrutiny at scale. Without it, AI is a liability. With it, AI becomes infrastructure.

The trust gap exists because many programs stall after flashy demos. Common issues include:

Data quality: According to Deloitte’s 2024 Banking & Capital Markets Data and Analytics Survey, 81% of respondents cite poor data quality as a top challenge.

Opaque reasoning: Outputs become unreliable when models guess from thin data and cannot show a clear chain of evidence.

Security and compliance risks: IBM’s 2024 Cost of a Data Breach Report shows the average breach cost for financial institutions to be 22% above the global average. Privacy and data-residency constraints make cloud-only tooling increasingly risky.

Domain gaps: Generic models don’t understand Probability of Default (PD), Loss Given Default (LGD), Exposure at Default (EAD), covenants, and sector nuance.

Weak governance: Role-Based Access Control (RBAC) is incomplete, logs are partial, human checkpoints are unclear, and challenger models are absent.

The result? Outcomes are unpredictable. Investment gets questioned. Trust erodes.

High-stakes credit decisions need systems that start with verified inputs, continue with explainable reasoning, and end with accountable human oversight. Generic chat assistants are useful for notes, drafts and summaries of public information. Credit decisions cannot tolerate guesswork. Large language models can guess. Financial-grade agents verify. That distinction matters.

At Galytix (GX), we build AI agents for Credit and Risk teams that are designed for accuracy, auditability and governed deployment. Agents like CreditX plan tasks, call the right tools, cross-check results, and escalate to an analyst whenever confidence drops below thresholds set by the institution.
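The escalation rule described above can be illustrated with a minimal sketch. It is not CreditX's implementation: the `AgentResult` type, the `route` function, the confidence scale of 0 to 1, and the extra rule that an answer with no cited source is always escalated are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentResult:
    """One agent output (hypothetical shape) with a confidence in [0, 1]."""
    task: str
    answer: str
    confidence: float
    sources: tuple[str, ...] = ()


def route(result: AgentResult, threshold: float) -> str:
    """Return 'auto' to proceed or 'analyst' to escalate for human review."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be between 0 and 1")
    # Escalate when confidence falls below the institution's threshold,
    # or (an assumption of this sketch) when no source is cited at all.
    if result.confidence < threshold or not result.sources:
        return "analyst"
    return "auto"


# Example values are made up for illustration.
confident = AgentResult("leverage ratio", "3.2x", 0.93, ("annual report, p. 41",))
uncertain = AgentResult("covenant headroom", "12%", 0.58, ("loan agreement, s. 7.2",))
print(route(confident, threshold=0.80))  # auto
print(route(uncertain, threshold=0.80))  # analyst
```

Keeping the threshold as a parameter rather than a constant reflects the point that the institution, not the vendor, sets the bar for escalation.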

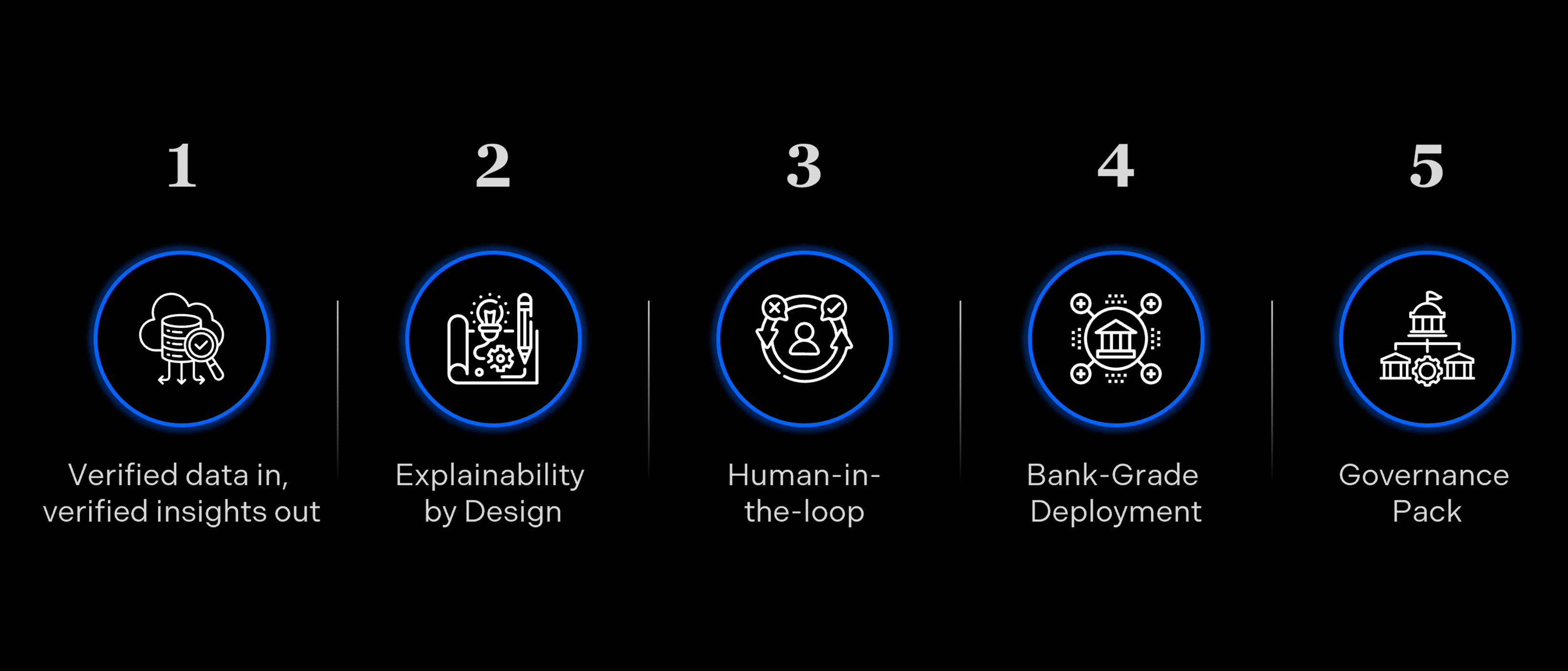

What makes us different:

Verified data in, verified insights out: Deterministic ingestion of filings, statements, PDFs, and contracts. Every claim cites its source.

Explainability by design: Reason codes, feature attributions, challenger models, and back-testing.

Human-in-the-loop: Analysts review and correct. The system learns from expert feedback.

Bank-grade deployment: On-premises or Virtual Private Cloud (VPC), Single Sign-On (SSO), Key Management System (KMS), full audit logs and granular permissions.

Governance pack: Documentation for model risk, data protection, and compliance to accelerate sign-off.

High-stakes credit decisions need verified inputs, explainable reasoning and accountable human oversight. Here is how that works, and how we prove it.

Intake and data: The agent pulls filings from verified sources, reconciles statements and flags gaps with full lineage.

What we measure: Lineage completeness, intake time, rework.

Impact: 20–30% faster decision cycles, fewer committee iterations.
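One plausible way to quantify lineage completeness, sketched under assumptions: each extracted claim is a dictionary with a hypothetical `sources` list, and completeness is the share of claims that cite at least one non-empty source. The field names and the definition itself are illustrative, not Galytix's metric.

```python
def lineage_completeness(claims: list[dict]) -> float:
    """Share of claims carrying at least one non-empty source reference."""
    if not claims:
        return 0.0  # nothing extracted, so nothing is traced
    traced = sum(
        1 for claim in claims
        if any(src.strip() for src in claim.get("sources", []))
    )
    return traced / len(claims)


# Example values are made up for illustration.
claims = [
    {"text": "Revenue grew 8%", "sources": ["FY2023 income statement"]},
    {"text": "Net debt fell", "sources": ["FY2023 balance sheet", "note 14"]},
    {"text": "Covenant headroom is adequate", "sources": []},
]
print(f"{lineage_completeness(claims):.0%}")  # 67%
```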

Scoring and Reasoning: The agent explains its view with reason codes and references, drafts the memo and highlights where judgment is needed. Human review adds comments, overrides and corrections.

What we measure: Override rate, memo preparation time, explainability coverage.

Impact: 100% policy adherence, fewer audit exceptions.
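The override rate named above could be computed along these lines. This is a sketch under assumptions: the record fields `agent_outcome` and `analyst_outcome` are hypothetical, and a decision counts as overridden when the reviewing analyst's outcome differs from the agent's.

```python
def override_rate(decisions: list[dict]) -> float:
    """Fraction of human-reviewed decisions where the analyst changed the outcome."""
    # Only decisions that an analyst actually reviewed enter the denominator.
    reviewed = [d for d in decisions if d.get("analyst_outcome") is not None]
    if not reviewed:
        return 0.0
    overridden = sum(
        1 for d in reviewed if d["analyst_outcome"] != d["agent_outcome"]
    )
    return overridden / len(reviewed)


# Example values are made up for illustration.
decisions = [
    {"agent_outcome": "approve", "analyst_outcome": "approve"},
    {"agent_outcome": "approve", "analyst_outcome": "refer"},
    {"agent_outcome": "decline", "analyst_outcome": "decline"},
    {"agent_outcome": "refer", "analyst_outcome": None},  # not yet reviewed
]
print(f"{override_rate(decisions):.1%}")  # 33.3%
```

Tracked over time, a rising rate would suggest the agent's reasoning is drifting away from analyst judgment, while a rate near zero may warrant a check that reviews are substantive.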

Continuous Oversight: Back-tests compare outcomes, challenger models benchmark performance and alerts surface drift or unusual overrides. Every decision has lineage, and every change has an owner.

What we measure: Drift alerts, time to resolution, audit readiness.

Impact: 80–90% automation in credit analysis.
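Drift alerts of the kind described above are often built on a distribution-comparison statistic. The sketch below uses the Population Stability Index (PSI), a measure widely used in credit risk monitoring; the source does not say which statistic Galytix uses, so PSI is a swapped-in example. The function names and the default alert threshold of 0.1 are assumptions, and the threshold is a common rule of thumb rather than a standard.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both inputs are per-bin proportions over the same bins, e.g. the share
    of the portfolio in each score band at baseline versus today.
    """
    if len(expected) != len(actual) or not expected:
        raise ValueError("inputs must be non-empty and the same length")
    total = 0.0
    for e, a in zip(expected, actual):
        # Floor empty bins at eps so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_alert(expected: list[float], actual: list[float],
                threshold: float = 0.1) -> bool:
    """True when PSI exceeds the threshold chosen by the institution."""
    return psi(expected, actual) > threshold


# Example values are made up for illustration.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]
print(drift_alert(baseline, baseline))  # False
print(drift_alert(baseline, current))   # True
```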

Guarded deployment: On-premises or virtual private cloud with single sign-on, key management, audit logs and granular permissions.

What we measure: Access governance, residency compliance, security events.

Impact: Faster sign-off and reduced breach exposure.

Regulatory alignment: Ensuring 100% compliance via consistent application of risk-based approaches.

What we ensure: Adherence to GDPR, AI regulations and information security standards.

Impact: Comprehensive documentation for financial institutions and reduced risk of data leakage.

Baseline before pilot, instrument during rollout, and report after go-live with tracked metrics, reviewer logs and timestamped evidence. Trust that is measured becomes policy.

Galytix removes the trust barrier for Credit and Risk teams. Our agents deliver verified data, explain every step and keep humans in the loop. They run on FI-grade infrastructure and deliver measurable investment returns.

Beyond a tagline, Trusted AI is a discipline that compounds confidence. That’s when investment pays off. That’s when your teams win.

Ready to see it in action? Contact us for a demo.


Sources:

Gartner (Agentic AI project failures), Gartner, Inc. (2025, June 25). Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027. https://www.gartner.com/en/newsroom/press-releases/2025-06-25-gartner-predicts-over-40-percent-of-agentic-ai-projects-will-be-canceled-by-end-of-2027

McKinsey & Company (Widespread AI experimentation), McKinsey & Company. (2025). The state of AI in 2025. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-2025

Deloitte (AI embedded in workflows), Deloitte. (2024). AI adoption and value realization in the enterprise. https://www.deloitte.com/global/en/our-thinking/insights/topics/digital-transformation/ai-adoption-and-value.html

Edelman (Trust and AI adoption), Edelman. (2024). Trust and the future of AI. https://www.edelman.com/trust/2024/trust-and-the-future-of-ai

Bank of England & Financial Conduct Authority (AI in banking), Bank of England & Financial Conduct Authority. (2024). Artificial intelligence in UK financial services. https://www.bankofengland.co.uk/report/2024/artificial-intelligence-in-uk-financial-services-2024

IBM Security (Cost of data breaches), IBM Security. (2024). Cost of a data breach report 2024. https://www.ibm.com/reports/data-breach

Deloitte (Banking & Capital Markets data survey), Deloitte. (2024). Banking & Capital Markets data and analytics survey. https://www.deloitte.com/global/en/industries/financial-services/analysis/banking-capital-markets-data-analytics-survey.html

BIS (AI explainability & model risk), Bank for International Settlements. (2025, September). Occasional Paper No 24: Managing explanations – how regulators can address AI explainability. https://www.bis.org/fsi/fsipapers24.pdf

OECD (AI regulation in finance), Organisation for Economic Co-operation and Development. (2024, September 5). Regulatory approaches to artificial intelligence in finance (OECD AI Papers No. 24). https://doi.org/10.1787/f1498c02